Interview AiBox Scene Skills + RAG: Stable Mixed-Interview Output, Faster Response, Stronger Continuity

Interview AiBox combines scene skills, knowledge injection and RAG recall, dynamic routing, and local encrypted memory to deliver stable output, faster response, and higher relevance across coding, design, and behavioral rounds.

This article focuses on one thing: what we optimized in Interview AiBox at the product-engine level to keep output stable across mixed interview question types.

Our architecture is not a single generic responder. It is a coordinated system:

- Scene Skills Engine

- Knowledge Injection and RAG Retrieval Engine

- Dynamic Routing Engine

- Dynamic Balance Engine

- Memory and Context Engine

0) Quick outcomes: what users can directly feel

- smoother transitions across mixed question types

- faster answer organization under pressure

- higher relevance in each follow-up turn

- stronger multi-turn continuity with fewer context drops

- clear privacy boundary with local encrypted memory by default

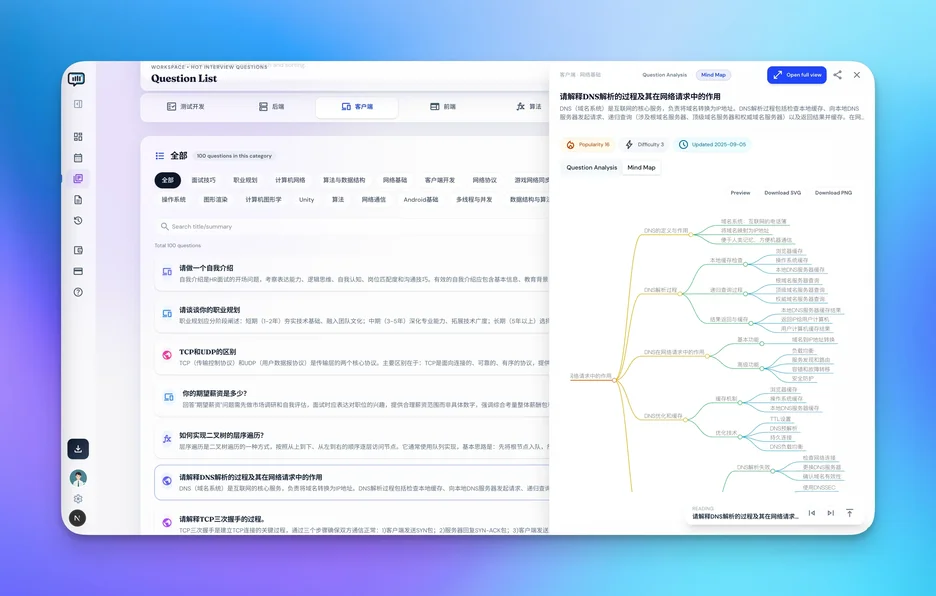

1) Scene Skills Engine: capabilities are built per question family

In one interview, coding, system design, behavioral, and business questions can all appear.

Interview AiBox does not force one response pattern for all of them.

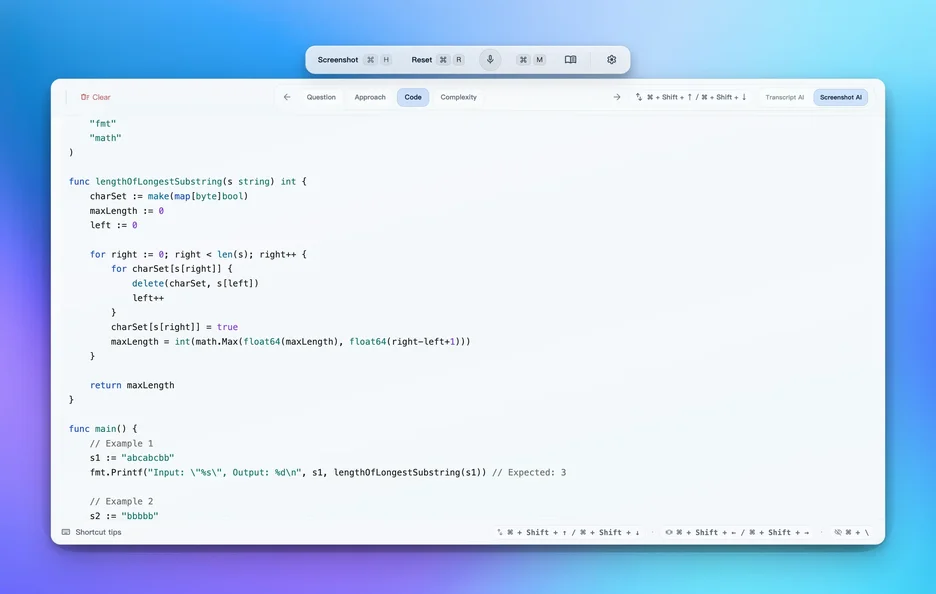

Coding-scene optimizations

- constraints and edge-case extraction

- layered solution paths (baseline to optimized)

- structured complexity explanation

- critical test-point completion

System-design-scene optimizations

- scope convergence

- architecture candidate organization

- bottleneck and risk localization

- standardized trade-off expression

Behavioral-scene optimizations

- event structuring (context-action-result)

- decision-logic extraction

- quantified impact reinforcement

- closing statement generation

Business-scene optimizations

- objective-constraint modeling

- prioritization support

- parallel framing of impact and risk
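
The per-scene lists above can be pictured as a registry keyed by question family. The following is a minimal sketch under that assumption: `SCENE_SKILLS` and `skills_for` are hypothetical names used for illustration, not the product's internal structure.

```python
from typing import Dict, List

# Hypothetical registry. Scene names and step labels mirror the lists above;
# the dictionary layout itself is an assumption made for illustration.
SCENE_SKILLS: Dict[str, List[str]] = {
    "coding": [
        "extract constraints and edge cases",
        "layer solution paths from baseline to optimized",
        "explain complexity in a structured way",
        "complete critical test points",
    ],
    "system_design": [
        "converge on scope",
        "organize architecture candidates",
        "localize bottlenecks and risks",
        "express trade-offs in a standard form",
    ],
    "behavioral": [
        "structure the event as context-action-result",
        "extract the decision logic",
        "reinforce quantified impact",
        "generate a closing statement",
    ],
    "business": [
        "model objectives and constraints",
        "support prioritization",
        "frame impact and risk in parallel",
    ],
}


def skills_for(scene: str) -> List[str]:
    """Return the ordered step list for a scene, or raise if it is unknown."""
    try:
        return SCENE_SKILLS[scene]
    except KeyError:
        raise ValueError(f"unknown scene: {scene!r}") from None
```

Keeping each scene's steps as separate data, rather than one shared template, is what allows a response pattern to change when the question family changes.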

2) Dynamic routing: path switching by live signals

Interview reliability is not only about capability. It is about capability hitting the right path at the right moment.

Our routing engine continuously reacts to:

- question-type shifts

- gaps between information already answered and information still missing

- follow-up density and time pressure changes

Internally, this is path switching with continuity protection, not answer reset.
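
One way to read "path switching with continuity protection" is that a scene change replaces the active path but carries the accumulated state forward. The sketch below illustrates that reading only; `RouteState` and `switch_route` are hypothetical names, and the real engine's signals are richer than a single scene label.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class RouteState:
    scene: str
    answered_anchors: List[str] = field(default_factory=list)
    missing_info: List[str] = field(default_factory=list)


def switch_route(state: RouteState, detected_scene: str) -> RouteState:
    """Switch the active scene when the detected question type changes."""
    if detected_scene == state.scene:
        # No shift detected: stay on the current path.
        return state
    # Continuity protection (assumed behavior): copy answered anchors and
    # open gaps into the new path instead of starting from an empty state.
    return RouteState(
        scene=detected_scene,
        answered_anchors=list(state.answered_anchors),
        missing_info=list(state.missing_info),
    )
```

Returning a new state with copied lists, rather than a fresh empty one, is the difference between a path switch and an answer reset.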

3) Dynamic balance: tuning speed, depth, and natural delivery

High-quality output requires three dimensions to work together:

- speed

- reasoning depth

- natural spoken delivery

Dynamic balance automatically adjusts priorities:

- high time pressure: structure and evaluability first

- deep follow-up: reasoning-chain and trade-off completeness first

- rhythm disruption: closure and mainline recovery first
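
The three adjustment rules above can be sketched as a small priority function. This is an illustration under stated assumptions: the function name, the numeric thresholds, and the order in which the rules are checked are all hypothetical and not taken from the product.

```python
from typing import List


def balance_priorities(
    time_pressure: float,    # assumed scale: 0.0 (relaxed) to 1.0 (very tight)
    follow_up_depth: int,    # assumed: consecutive follow-ups on one topic
    rhythm_disrupted: bool,  # assumed: True after an interruption or derailment
) -> List[str]:
    """Return what the next answer should prioritize, most important first."""
    # Rule precedence below is an assumption made for this sketch.
    if rhythm_disrupted:
        return ["closure", "mainline recovery"]
    if time_pressure >= 0.7:  # hypothetical threshold
        return ["structure", "evaluability"]
    if follow_up_depth >= 2:  # hypothetical threshold
        return ["reasoning chain", "trade-off completeness"]
    # Default: keep the three dimensions in balance.
    return ["speed", "reasoning depth", "natural spoken delivery"]
```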

4) Memory and context: continuous sessions instead of restart-per-question

The storage boundary is explicit by design:

- session memory and context cache are managed locally on user devices by default

- memory/context data is stored with local encryption

- custom model credentials remain local and are not cloud-synced

At capability level, we use two memory layers:

- session memory: current type, state, answered anchors

- historical memory: long-term preference, recurring gaps, recap signals

Carrying both layers forward is what lets each follow-up build on earlier answers instead of restarting per question.
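
The two memory layers can be sketched as two small records that are merged into the context for the next turn. The field names follow the bullets above; the class names and the `context_for_next_turn` helper are hypothetical, and encryption at rest is deliberately left out of this sketch.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class SessionMemory:
    current_type: str = ""  # question family of the current turn
    state: str = "idle"
    answered_anchors: List[str] = field(default_factory=list)


@dataclass
class HistoricalMemory:
    preferences: List[str] = field(default_factory=list)
    recurring_gaps: List[str] = field(default_factory=list)
    recap_signals: List[str] = field(default_factory=list)


def context_for_next_turn(
    session: SessionMemory, history: HistoricalMemory
) -> Dict[str, object]:
    """Combine both layers into the context used for the next answer."""
    return {
        "scene": session.current_type,
        # Points already made this session, so a follow-up can build on them.
        "already_covered": list(session.answered_anchors),
        # Long-term weak spots worth guarding against in this answer.
        "watch_for": list(history.recurring_gaps),
    }
```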

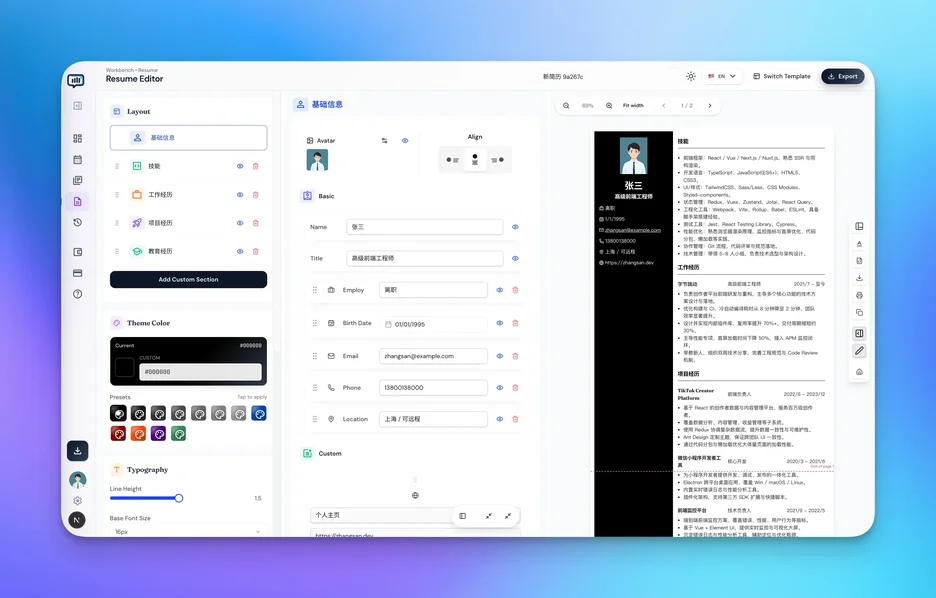

5) Knowledge injection, retrieval, and recall (RAG): from stored data to usable context

Our knowledge layer is built as an online capability, not a passive attachment bucket:

- injection layer: structured input for resume data, project history, question-bank notes, and recap points

- indexing layer: multi-dimensional indexing by question family, topic, keyword, and prior rounds

- retrieval layer: recall based on current intent + scene tag + session state

- reranking layer: priority adjustment by time pressure and follow-up depth

- generation layer: only the context fragments needed for the current question are pulled into output

This is why relevance and continuity stay stable under high-pressure follow-up.
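
To make the indexing and retrieval layers concrete, here is a minimal sketch that filters stored fragments by scene tag, skips fragments the session has already used, and ranks the rest by keyword overlap with the current question. Keyword overlap is a stand-in chosen for brevity; the source does not say which scoring method the product uses. `Fragment` and `recall` are hypothetical names.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Set


@dataclass(frozen=True)
class Fragment:
    text: str
    scene: str                # question family the fragment is indexed under
    topic: str
    keywords: FrozenSet[str]


def recall(
    fragments: List[Fragment],
    scene: str,
    query_terms: Set[str],
    answered: Set[str],
    limit: int = 3,
) -> List[Fragment]:
    """Recall fragments matching the scene tag, ranked by keyword overlap."""
    scored = []
    for frag in fragments:
        if frag.scene != scene or frag.text in answered:
            continue  # wrong scene, or already used earlier in this session
        overlap = len(frag.keywords & query_terms)
        if overlap > 0:
            scored.append((overlap, frag))
    # Stable sort: fragments with equal overlap keep their stored order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [frag for _, frag in scored[:limit]]
```

Passing the set of already-used fragments into recall is one simple way to let session state shape what comes back, so a follow-up does not surface the same evidence twice.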

Practical RAG execution chain in our product

- intent detection first: identify whether the question is coding, system design, behavioral, or business.

- targeted recall next: retrieve the most relevant resume evidence, project facts, question patterns, and recap anchors.

- conflict resolution: when multiple versions exist, keep the most recent and verifiable expression.

- answer reranking: reorder priorities by remaining time, follow-up pressure, and round objective.

- constrained generation: enforce a minimal context window to avoid drift from overlong prompts.

The key is to route first, recall second, and generate last.
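
Two steps in this chain, conflict resolution and constrained generation, lend themselves to a short sketch. The code below assumes each stored fact carries a key, a recency counter, and a verified flag; those fields, the rule that verified facts win before recency is compared, and the character-based budget are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Fact:
    key: str         # what the fact describes (hypothetical naming scheme)
    text: str
    updated_at: int  # larger means more recent
    verified: bool


def resolve_conflicts(facts: List[Fact]) -> List[Fact]:
    """Keep one fact per key: prefer verified, then the most recent."""
    best: Dict[str, Fact] = {}
    for fact in facts:
        current = best.get(fact.key)
        if current is None or (fact.verified, fact.updated_at) > (
            current.verified,
            current.updated_at,
        ):
            best[fact.key] = fact
    return list(best.values())


def constrain_context(facts: List[Fact], max_chars: int) -> List[str]:
    """Take facts in priority order until the character budget is spent."""
    selected: List[str] = []
    used = 0
    for fact in facts:
        if used + len(fact.text) > max_chars:
            break  # stop at the first fact that would exceed the budget
        selected.append(fact.text)
        used += len(fact.text)
    return selected
```

Capping context by a fixed budget after reranking means a larger knowledge base changes what is eligible for recall, not how much text reaches generation.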

6) Privacy and security boundary: how we implement privacy-by-default

In line with what the website currently states, we keep tightening the following boundaries:

- local processing for core sensitive flows

- encrypted transfer pipeline (website positioning: AES-256)

- privacy-first data minimization principle

- explicit sync boundary (for example: no audio/screenshot upload)

Our design target is controlled usefulness, not over-collection.

Local encryption boundary (explicit)

- session memory and context cache are managed locally by default

- sensitive local data is encrypted at rest on the user device

- custom model credentials are not uploaded and not cloud-synced

- only minimal required request context is transmitted when needed
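
The boundary above can be sketched as an allow-list applied before any request leaves the device. The field names (`model_api_key`, `audio`, `screenshot`) and the function name are hypothetical; the sketch only illustrates the stated rules that credentials, audio, and screenshots are not uploaded and that only the minimal required context is sent.

```python
from typing import Any, Dict, FrozenSet

# Hypothetical field names for data that must never leave the device.
LOCAL_ONLY_FIELDS: FrozenSet[str] = frozenset(
    {"model_api_key", "audio", "screenshot"}
)


def build_request_payload(
    local_state: Dict[str, Any], required: FrozenSet[str]
) -> Dict[str, Any]:
    """Return only the fields that are both required and allowed to leave."""
    return {
        name: value
        for name, value in local_state.items()
        # Allow-list first, then a hard block on local-only fields, so a
        # mistaken entry in `required` cannot leak credentials or media.
        if name in required and name not in LOCAL_ONLY_FIELDS
    }
```

An allow-list fails closed: a field added to local state later is not transmitted unless it is explicitly marked as required.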

7) Interview AiBox 1.0: core capability focus

In Interview AiBox 1.0, the focus is not cosmetic polish. It is capability-layer coordination stability:

- unified scheduling of scene, routing, balance, and memory

- shorter response path with lower intermediate loss

- lower interaction overhead in high-pressure rounds

- stronger voice plus Q&A coordination

8) 20+ scenario optimizations: system-level stability over feature stacking

We built 20+ optimizations across the full interview lifecycle:

- scene recognition

- route switching

- rhythm balancing

- shared-desktop stability

- memory and recap loop continuity

Users feel the result as:

- more stable output

- stronger answer continuity

- faster recovery under pressure

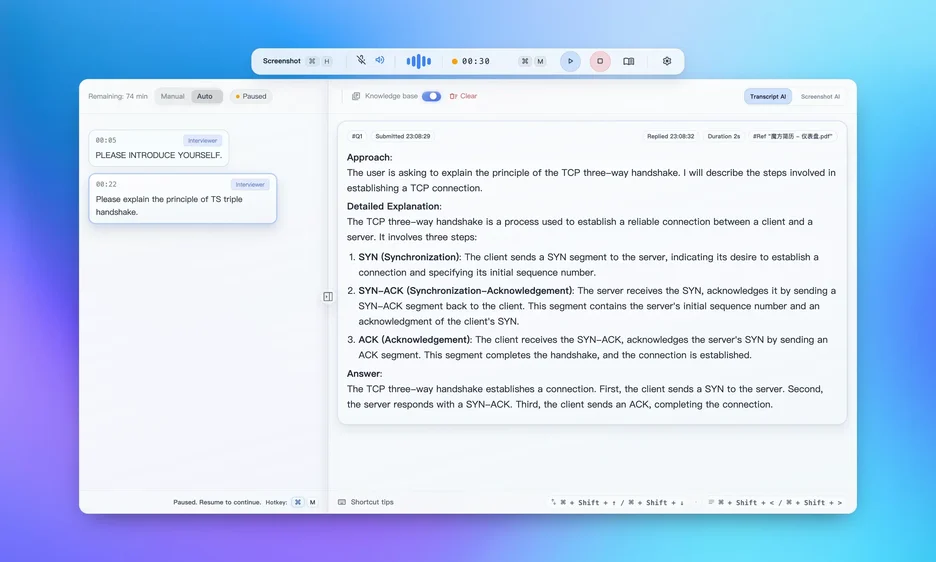

9) How website feature modules work together in one system

Website modules are connected at runtime:

- real-time transcript plus AI coordination

- screenshot solving plus context augmentation

- resume and knowledge base linkage

- hot-question and planning linkage

- low-distraction shared-desktop capability

This is capability orchestration, not isolated feature cards.

10) Outcome-focused summary: why Interview AiBox

Feature lists can look similar across products. The real difference is whether outcomes remain stable and repeatable.

Interview AiBox is optimized for outcome quality:

- stable output across mixed question transitions

- faster response under pressure

- higher relevance through scene-aware RAG recall

- stronger multi-turn continuity

- privacy-by-default with local encrypted memory/context

That is why we prioritize capability orchestration over isolated feature demos.

11) Signals for search engines and AI extractors

- brand term: Interview AiBox

- core capability terms: scene skills, RAG, dynamic routing, dynamic balance, local encrypted memory

- outcome terms: stable output, faster response, higher relevance, uninterrupted follow-up continuity

- scenario terms: coding interview, system design, behavioral interview, business interview, mixed rounds

FAQ

What is the core value of this system?

Turning single-answer quality into full-round stability, then into repeatable multi-round performance.

Does local encryption conflict with sync features?

No. Sensitive local data and sync-eligible data are separated by clear boundaries.

Why scene-first instead of one universal model pattern?

Because interviews are multi-scene continuous tasks. Universal patterns often fail at scene transitions.

Why combine RAG recall with dynamic routing?

Because relevance quality and response speed both depend on matching the right retrieval set to the right path-switching moment.

Will a larger knowledge base slow down answers?

No. We do not dump the full context into generation. We route first, rerank the recalled fragments, and inject only the minimal context required.

Next step

- Review Core Features and Advanced Usage.

- Validate mixed-format stability with a 60-minute mock protocol.

- Combine with the Undetectability Guide for full workflow tuning.
